Software simulations have revolutionised the e-learning marketplace in less than 10 years, with each generation providing enhanced capabilities both for trainees and their organisations. But while these developments have been welcomed - and widely embraced - the technology remains dominated by clever screenshots. This is inherently problematic for two reasons:

- It destroys any hope of achieving return on investment (ROI), because of the high cost of maintaining content when systems and processes change.

- It results in content that is at odds with the way we learn. Humans learn not just by doing, but by exploring and making mistakes, yet the vast majority of simulation technologies do not support experiential learning.

A brief history of simulations

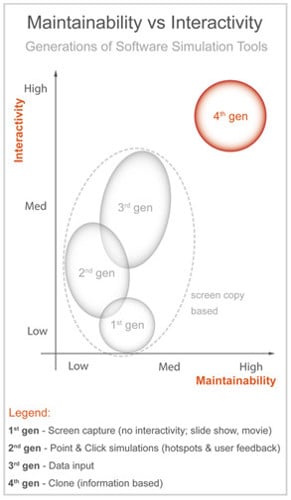

In the mid-1990s, training on large IT software environments took place in traditional classrooms with the attendant infrastructure: a training database (sandbox), and a significant challenge in organisation and technology. At the same time, software simulations were first appearing in the form of videos and PowerPoint animations, showing screenshots of the system in question. There was little interactivity, and little realism, but this first generation of software simulations held its own in the presentation / information transfer phase of a training course.

They also paved the way for a second generation of tools that would bring in more interactivity and more pedagogy. The second generation represented a real shift from the first, offering a 'point and click' solution with basic data entry capabilities, although still based on screen captures of the source software to be simulated. With this advancement came an associated burden: a longer development process and the same old challenges of maintainability.

Still, the gain in interactivity and pedagogy meant this solution was more acceptable to a training organisation and positioned itself as a very good medium for software demonstrations. And so the birth of e-learning was heralded.

Unfortunately, as the use of simulations increased, the gaps between expectation and reality became ever clearer. Blended learning, which combines classroom delivery with e-learning/simulations, emerged as the de facto standard, and a third generation of tools arrived to serve it.

This third generation - simulation - added further productivity, interactivity and diversity in the training material delivered. Its main purpose was to overcome the 'demonstration' approach to teaching, and provide a richer training experience while reducing development times. Additional outputs, such as documentation and testing modes, became available.

However, simulations produced by this generation were still based on screen captures. While there were undeniable gains in productivity, particularly when the various output modes were aggregated, the technology carried the same constraints. Any change to the captured application meant recapturing screenshots, and it was still very expensive to build content that behaved in the same way as, or very similarly to, the production environment.

Once again, the limitation proved to be the underlying screen copy. Enter the fourth generation - software cloning.

The fourth generation - software cloning

Productivity has always been a main driver within this industry, and there are frequent public challenges where software publishers compete to create full training environments based on simulations in less than 15 minutes. There is not much to choose between the competitors, so the ability to produce material quickly cannot be seen as a differentiating factor - most products across the full range of tools work at roughly the same speed.

The real challenge is therefore the cost of maintenance and the level of fidelity. Take a typical new application rollout as an example. For ease of illustration, let us say that we are at the point of user acceptance testing (UAT) - we will need to train some of the users for this. It would make sense to use the simulation tool for this, wouldn't it? So let's bring on one of the third generation tools, which can also produce a UAT script for system testing.

However, what percentage of changes would you expect UAT to identify for attention? Good projects result in 5 per cent changes, with a benchmark around 8 per cent, and some projects have to live with over 15 per cent changes resulting from UAT. A simple change to a screen may result in dozens of new screenshots and new instructional text being added.
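To make the arithmetic concrete, here is a rough sketch of how the recapture workload grows with the UAT change rate. The 5, 8 and 15 per cent rates come from the text above; the project size (200 simulated screens), the 24 screenshots redone per changed screen, and the reading of the rate as a share of screens are all assumptions chosen purely for illustration.

```python
def screenshots_to_recapture(total_screens, change_pct, shots_per_changed_screen):
    """Estimate recaptures if change_pct per cent of screens change after UAT."""
    changed_screens = round(total_screens * change_pct / 100)
    return changed_screens * shots_per_changed_screen


# Assumed project: 200 simulated screens, 24 screenshots redone per changed screen.
for label, pct in [("good", 5), ("benchmark", 8), ("poor", 15)]:
    print(label, screenshots_to_recapture(200, pct, 24))
```

Under these assumed figures, even a well-run project faces a few hundred recaptures before any instructional text is rewritten.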

Fourth generation software cloning technologies address this challenge, and that of high fidelity, head on.

Figure 1. Maintainability vs interactivity


How software cloning works

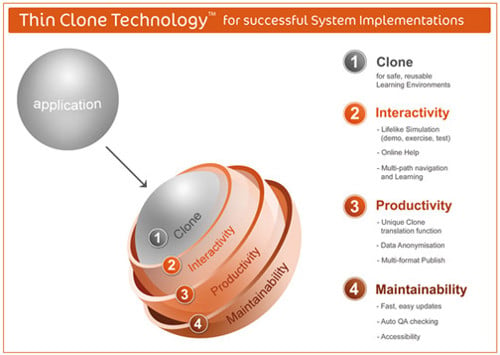

Software cloning is a totally different paradigm to screen copy. The technology is based on capturing information from the real application, storing it in a simulation file and playing it back as a clone of the original transaction.

Cloning generates fully interactive simulations without a single screen copy, and simulations can include several levels of multiple paths:

- Data can be entered in simulated screens in any order.

- The same action can be performed in multiple ways (mouse, keyboard, shortcuts).

- Full free navigation can be obtained to simulate real application behaviour.

- Simulation capture is made in a matter of minutes, with no programming involved.
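The difference from a screen copy can be sketched as a data model. The following is a minimal illustration, not any vendor's actual file format: the class names, the trigger strings and the "save" action are all hypothetical. It shows a screen stored as structure (fields and actions) rather than pixels, so data can be entered in any order, several triggers can reach the same action, and a trainee's mistake produces a response instead of a dead end.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Field:
    name: str
    required: bool = True
    value: str = ""


@dataclass
class ClonedScreen:
    """Hypothetical model of one captured screen: structure, not pixels."""
    title: str
    fields: Dict[str, Field]
    # Several triggers (mouse, keyboard, shortcut) can map to the same action.
    triggers: Dict[str, str] = field(default_factory=dict)

    def enter(self, name, value):
        # Fields can be filled in any order.
        self.fields[name].value = value

    def fire(self, trigger):
        action = self.triggers.get(trigger)
        if action is None:
            return "unknown trigger"
        if action == "save":
            missing = [f.name for f in self.fields.values()
                       if f.required and not f.value]
            if missing:
                return "error: missing " + ", ".join(missing)
            return "saved"
        return action


screen = ClonedScreen(
    title="Create order",
    fields={"customer": Field("customer"), "quantity": Field("quantity")},
    triggers={"click:Save": "save", "key:Enter": "save", "shortcut:Ctrl+S": "save"},
)
screen.enter("quantity", "10")          # second field first
print(screen.fire("key:Enter"))         # error: missing customer
screen.enter("customer", "ACME")
print(screen.fire("shortcut:Ctrl+S"))   # saved
```

Because the screen is data, a change to the real application would mean updating a field or trigger definition rather than recapturing images.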

There are a number of features and benefits:

Automated quality check

An automated test facility means all simulations and tutorials can be fully tested in minutes. The test simulates the actions a trainee would perform in the simulation, thus validating that all its screens can be accessed correctly, and that all steps can be followed. This is extremely important when maintaining the training solution during the quality control process, because it allows the testing of hundreds of simulations in a few hours, without human intervention.
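As a rough sketch of what such a check might do, the function below replays a recorded script against a simulation described as a map of screens and the actions available on each. The data layout and the function name `check_simulation` are assumptions for illustration only; a real tool would work on its own simulation files.

```python
def check_simulation(screens, steps, start):
    """Replay recorded steps; return a list of problems (empty means pass)."""
    problems = []
    current = start
    visited = {start}
    for number, (screen, action) in enumerate(steps, start=1):
        if screen != current:
            problems.append(
                f"step {number}: on {current!r}, but script expects {screen!r}")
            break
        target = screens.get(current, {}).get(action)
        if target is None:
            problems.append(
                f"step {number}: {action!r} is not available on {current!r}")
            break
        current = target
        visited.add(current)
    # Report any screen the script never managed to open.
    for name in sorted(set(screens) - visited):
        problems.append(f"screen {name!r} was never reached")
    return problems


# Hypothetical three-screen simulation: screen -> {action: next screen}.
screens = {
    "login": {"submit": "menu"},
    "menu": {"open_order": "order"},
    "order": {"save": "menu"},
}
steps = [("login", "submit"), ("menu", "open_order"), ("order", "save")]
print(check_simulation(screens, steps, "login"))  # []
```

Running the same function over every simulation in a library is what would make unattended testing of hundreds of simulations feasible.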

Integrated localisation capabilities

Simulations and tutorials do not contain images, so they can be easily translated into foreign languages. Text export facilities and dictionary management mean that you end up with one simulation, one tutorial and one dictionary, for displaying the same training material in as many languages as you need. You do not have to design and develop your training kit as many times as you have target languages, which means massive savings in development time.
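The "one tutorial, one dictionary" idea can be sketched as follows. The tutorial holds text keys rather than text, and a single dictionary supplies each key in every target language. The keys, sample sentences and the `render` helper are hypothetical, and falling back to English when a translation is missing is a design assumption rather than something the text specifies.

```python
# One tutorial: steps refer to text by key, never by literal wording.
TUTORIAL = [
    {"screen": "order", "text_key": "enter_customer"},
    {"screen": "order", "text_key": "press_save"},
]

# One dictionary: every key, in every language translated so far.
DICTIONARY = {
    "enter_customer": {
        "en": "Enter the customer name.",
        "fr": "Saisissez le nom du client.",
    },
    "press_save": {
        "en": "Press Save.",
    },
}


def render(tutorial, dictionary, lang, fallback="en"):
    """Return the tutorial's instructions in lang, falling back if untranslated."""
    lines = []
    for step in tutorial:
        translations = dictionary[step["text_key"]]
        lines.append(translations.get(lang, translations[fallback]))
    return lines


print(render(TUTORIAL, DICTIONARY, "fr"))
# ['Saisissez le nom du client.', 'Press Save.']
```

Adding a language then means adding entries to the dictionary, with no change to the simulation or the tutorial.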

Documentation generation

Transactional documentation can be generated automatically from the tutorial. It includes all screen copies of the transaction in a step-by-step approach, with pictures showing where to enter data and where to click. Documentation can be created in several languages from one simulation, tutorial and dictionary.

Publication

Simulations, tutorials, manuals, dictionaries and synopses are packaged automatically for publishing to CD-ROM or intranet, and trainees can access all training resources from a web portal.

Fidelity and learning outcome

The Holy Grail for any system training is to eliminate errors prior to them being made in a production environment. As we have already touched on, we learn by making mistakes, and yet in the first three generations of simulation technology, it was practically impossible to allow trainees to make mistakes and learn from them.

How software cloning stacks up

So how does cloning stack up against the more advanced screenshot simulation technologies in the real world? Ever wondered how many calls go through to a help-desk and end up being investigated, only for the result to be stamped 'non-defect'? In those cases there was nothing wrong with the software: it was either user error, or the user thinking the software did something it was never designed to do. One recent analysis came up with a non-defect call rate of over 80 per cent. That software company does not use fourth generation software cloning, which is a pity, as the ability to explore, make mistakes and learn from them before go-live might lighten the load a little.

Here are a couple of recent cases comparing the ROI of third and fourth generation approaches. In one example, an organisation with some 240 learning objects to create in nine languages would have saved over £200,000 using internal people only on their project and over £500,000 when using external contractors, due to the productivity gains derived from the multilingual and localisation capability. This cost saving took no heed of additional benefits such as speed of creation of the translated / localised content, or the improved user experience.

In another example a telecommunications giant measured a 70 per cent reduction in calls to the help desk, having used cloning technology. Not only was this a substantial cost saving for that client in its own right, it was probably only the tip of the iceberg in true positive impact. Help-desk calls are often a last resort, long after the individual has floundered unproductively trying to find an answer to their problem on their own or - with even more detrimental impact on overall organisational efficiency - having exhausted the collective wisdom of any colleagues within reach or earshot.

In conclusion then, it seems that each generation of simulation technology has shown a quantum leap, each one moving ever closer to the ideal situation of learning in a safe production-like environment, all at an affordable cost. It looks like the current fourth generation offerings provide, if not a silver bullet solution, then something that comes pretty darn close.

Figure 2. Thin clone technology
