With the rapid development of Artificial Intelligence (AI), and growing demand for its use, there are new and serious challenges for the ethical use of these systems.

Ethical challenges and professional registration

Ethical issues are currently most visible in emerging generative AI platforms (used for creating content such as text and images). They include misinformation, intellectual property and plagiarism, bias in training data, and the ability to influence and manipulate public opinion (for example during elections).

The Post Office Horizon IT Scandal has highlighted the vital importance of independent standards of professionalism and ethics in the application, development and deployment of technology.

It is right that the UK aims to play a leading role in developing safe, trustworthy, responsible and sustainable AI, as demonstrated by the summit in November 2023 and the associated Bletchley Declaration [1].

We argue these objectives can only be achieved when:

- AI and other high-stakes technology practitioners meet shared standards of accountability, ethics and competence, as licensed professionals.

- Non-technical CEOs, leadership teams and governing boards making decisions on the resourcing and development of AI in their organisations share in that accountability and have a greater understanding of the technical and ethical issues.

Supporting ethical and safe AI

The BCS paper Living with AI and emerging technologies: meeting ethical challenges through professional standards makes several clear recommendations to support ethical and safe AI:

- Every technologist working in a high-stakes IT role, in particular AI, should be a registered professional meeting independent standards of ethical practice, accountability and competence.

- Government, industry and professional bodies should support and develop these standards together to build public trust and create the expectation of good practice.

- UK organisations should publish policies on ethical use of AI in any relevant systems for customers and employees – and those should also apply to leaders who are not technical specialists including CEOs.

- Technology professionals should expect strong and supported routes for whistleblowing and escalation when they feel they are being asked to act unethically or, for example, to deploy AI in a way that harms colleagues, customers or society.

- The UK government should aim to take a lead in and support UK organisations to set world-leading ethical standards.

- Professional bodies, such as the BCS, should support this work by seeking and publishing regular research on the challenges their members face, and by advocating for the support and guidance they need and expect.

A PDF version of this paper is available for download.

The BCS Ethics Survey 2023

BCS’ Ethics Specialist Group carried out a survey of IT professionals in the summer of 2023, to help in identifying the challenges that practitioners face [2], ahead of the AI Safety Summit. This paper outlines the key findings and suggests some actions that should happen to help address the challenges.

The online survey was sent to all UK BCS members in August 2023. It was answered by 1,304 individuals. The findings show that AI ethics is a topic that BCS members see as a priority, that many have encountered personally and which is problematic in many ways.

There is a lack of consistency in how companies deal with ethical issues in tech, with many organisations reportedly not giving any support to staff.

A summary of the findings highlights that:

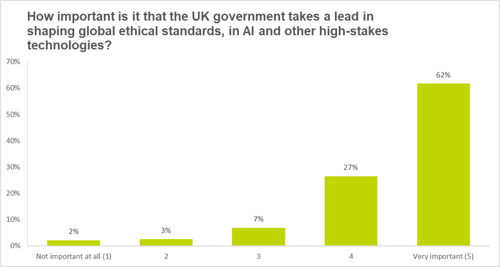

- 88% of participants believe it is important that the UK government takes a lead in shaping global ethical standards, in AI and other high-stakes technologies

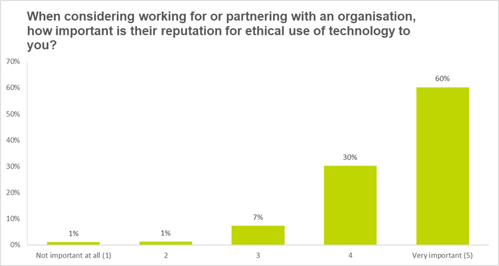

- When considering working for or partnering with an organisation, 90% stated that its reputation for ethical use of AI and other emerging technologies is important

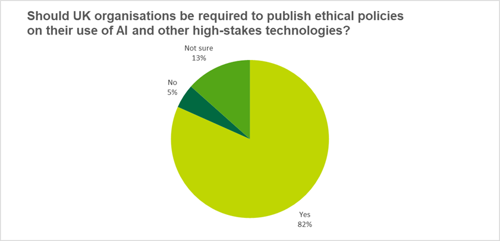

- 82% think that UK organisations should be required to publish ethical policies on their use of AI and other high-stakes technologies

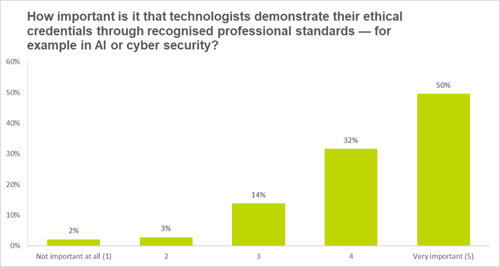

- When it came to technologists demonstrating their ethical credentials through recognised professional standards, 81% of respondents feel this is important

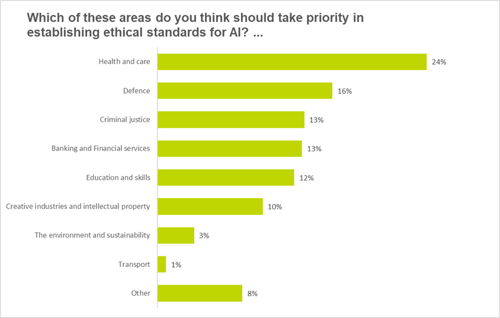

- The greatest number of respondents (24%) indicated that health and care should take priority in establishing ethical standards for AI

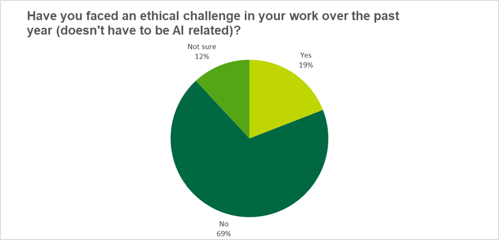

- 19% of those questioned have faced an ethical challenge in their work over the past year

- Support from employers on these issues varies, with many respondents stating they had poor or no support in dealing with ethical issues relating to technology, though there were examples of good practice

Analysis of results

Priority areas for establishing ethical standards in AI

Almost a quarter saw health and care as the key area, which is not surprising given the obvious possible ramifications of AI in surgery, diagnostics or patient interaction. However, other fields such as defence, criminal justice, banking and finance can also experience harmful consequences and need attention, according to respondents.

Ethical issues in professional IT practice

When asked whether technologists had faced ethical challenges in their work over the past year, 69% of respondents answered no.

Since the remaining 31% answered either yes (19%) or not sure, this suggests that on an annual basis up to about a third of BCS members may have faced ethical challenges. That implies that dealing with ethical challenges is virtually unavoidable over a professional career, and supports the long-standing emphasis on including ethics in BCS-accredited training.

Interestingly, 12% of the respondents were not sure whether they had faced ethical challenges, suggesting either lack of conceptual clarity with regards to ethics or rapidly shifting concerns that do not allow for easy categorisation of ethical issues.

Organisational reputation for ethical use of AI and other high stakes technologies

The recognition of the importance of ethics and the awareness of potential harm caused by technology explains why the respondents had a very strong preference for working with organisations with a strong reputation for ethical use of technology. 90% of BCS members who responded to the survey felt that this is either very important or important. This raises challenges for organisations with regards to appropriate mechanisms that allow them to demonstrate and signal their commitment to ethical work, one of which is the publication of ethical policies.

Requirement to publish ethics policies

The high standard to which respondents hold the ethical reputation of organisations they work with was reflected by the strong support for a requirement for organisations to publish their policies on ethical use of technologies, including AI.

More than 80% of respondents supported this and only 5% opposed it outright. This position is important in that it not only supports the creation of ethical policies and their application but goes beyond this by calling for a requirement to publish them.

Importance of government leadership on AI and technology ethics

The previous question already shows that respondents feel that government has a key role to play in ensuring ethical use of critical technologies, such as AI. To further investigate the theme of government, in terms of policies and standards, we posed the question “How important is it that the UK government takes a lead in shaping global ethical standards, in AI and other high-stakes technologies?”.

88% of respondents believe it is important that the UK government takes a lead in shaping global ethical standards, in AI and other emerging technologies. This also shows the need for parties preparing manifestos ahead of the general election in 2024 to clarify their positions on the ethical use of technology.

This also applies to the devolved administrations and the relevant policies within their remits. Initiatives like the Centre for Data Ethics and Innovation (CDEI) are a good way of developing these policies. The CDEI’s ethics advisory board term ended in September 2023, but the centre will continue to seek ‘expert views and advice in an agile way that allows us to respond to the opportunities and challenges of the ever-changing AI landscape’ [3]. In November 2023, the UK government announced the creation of the AI Safety Institute [4].

Demonstrating ethical credentials is ‘very important’

Respondents clearly saw that they face responsibilities in their role as IT professionals. This can be seen from their strong support for the acquisition of ethical credentials, for example through ethical standards in areas such as AI or cybersecurity. Only 5% of respondents saw these as not important with 81% seeing them as either important or very important.

Support for IT professionals

In our final question, we asked “How did your organisation support you in raising and managing the [ethical] issue?”.

Of those who responded, 41% said they received no support and 35% received ‘informal support’ (such as talking to their line manager/colleagues).

The survey showed examples of good practice, as expressed, for example, in this response:

“My employer listened to my potential ethical concern. I was supported in discussing the potential concern with our customers. Our customers and my employer agreed to put in place controls to ensure the potential ethical situation was appropriately managed.”

This shows that some organisations follow good practice, engage in ethical discussions and develop clear policies and procedures for employees.

However, this type of good practice cannot be found everywhere. Many IT professionals have to decide on ethical challenges themselves or in small groups without the organisation’s visibility, as exemplified by the following comments:

“It did not. I was left to manage the issue myself.”

“Talked my decision through with colleagues.”

For some, even if their organisation is informed it will simply suppress or pay lip service to the issue, for instance:

“Message was received, understood....and ignored.”

One of the more worrying aspects of the results was that, of the people who received no support, 13% said they were subjected to threats of dismissal, disciplinary action or left their roles, for example:

“They reprimanded me for raising the issue and threatened my job if I did it again”.

To safeguard these professionals from such threats and to encourage ethical behaviour, there should be clearly supported whistleblower provisions so that they can safely report ethical challenges.

If an organisation does not provide sufficient support, it risks its employees resigning. As one respondent clearly said:

“Previous workplace - they did not support me, hence why they are previous”.

Recommendations

The IT professionals represented here are just one stakeholder group affected by ethics in technologies like AI. They form part of the larger AI ecosystem. The survey highlighted several points that call for interventions by different parts of that network. Our detailed recommendations are divided accordingly.

Recommendations for UK Policymakers

The survey shows that tech professionals see a crucial role for the UK government in ensuring that the UK AI and IT ecosystem is a responsible one, and sets a world standard.

Ways of ensuring that this becomes the case include:

- Adopting a leadership position in global ethical governance of AI and IT.

- Legislating for the development of appropriate standards and certification that incorporate ethical aspects of AI and IT.

- Strengthening mechanisms that require organisations to report their ethical policy on use of critical technologies like AI, with accountability across senior leadership teams and governing boards, as well as senior technologists.

- Recognising the systems nature of AI and considering mechanisms to shape accountability across the UK AI ecosystem, for example by:

  - strengthening professional accreditation

  - funding relevant training and research pathways

Recommendations for industry

The IT and AI industry plays a crucial role in the AI ecosystem, and is the home of world-leading research as well as thought leadership on the deployment of AI. Yet industry does not consistently support IT professionals in dealing with ethical challenges.

The following policy changes can help address this, if broadly supported:

- Ensuring there are policies and protected, well-publicised channels within the organisation to raise and deal with ethical challenges.

- Publishing ethical policies for the benefit of organisational members but also the wider ecosystem.

- Recognising the importance of IT professionals in achieving the goal of creating a responsible AI ecosystem [5] and considering professional status and accreditation in hiring and promotion decisions.

Recommendations for professional bodies

Professional bodies can play a major role in the AI ecosystem, because the standards and guidance they provide to members, from accountancy to law, will increasingly need to take account of AI. As such, they can give independent, sector-specific evidence to government and industry.

Ways in which they can provide support are:

- Providing professional registration to recognise competence in developing and managing responsible, ethical AI systems.

- Supporting ongoing training and accreditation options to give professionals the knowledge and credentials they need to tackle ethical challenges.

- Shaping industry-wide standards and guidance for organisations to adapt into their own policies and use to support their employees.

- Working with decision makers who are not tech professionals (for example policy advisers, elected politicians, CEOs, governing boards) to raise the quality of understanding of ethical and technical challenges around AI and other high stakes technologies.

- Highlighting the ethical use of AI and other emerging technologies as a core requirement of their Codes of Conduct and supporting whistleblowing mechanisms for members.

- Adopting and learning from established best practice elsewhere, such as the new EU ICT Ethics - European Professional Ethics Framework for the ICT Profession [6], and sharing that across the UK and wider.

Recommendations for IT professionals

Individual IT professionals play a crucial role in translating general ethical insights into technical and organisational realities. To play this role successfully, IT professionals should be:

- Ensuring their professional development includes current ethical concerns.

- Strengthening specialist knowledge through appropriate accreditation and certification on ethical concerns.

- Contributing to the development of ethical standards and good practices.

- Supporting greater ethical and technical understanding of AI and other technologies among colleagues across their organisations and client-base, supported by their professional bodies.

- Using their subject expertise to engage with the broader public on issues of ethics of AI and digital technologies.

These recommendations will only be successful if they move the whole UK AI and broader IT ecosystem towards the recognition that ethics is not a static topic. Instead, it is one that requires continuous critical reflection and engagement.

The Post Office Horizon IT scandal illustrates the dangers to users, organisations and government of technology being misunderstood or misrepresented, and of failure to address ethical issues early.

Professional bodies such as BCS have a crucial role to play in this context. BCS will therefore work with its members, other professional bodies and stakeholders to explore the development of standards, certification mechanisms and training curricula. BCS will also work with government and industry to ensure that the benefits of AI and future technologies can be reaped while ethical issues and concerns are properly addressed.

Further Reading

- [1] The Bletchley Declaration by Countries Attending the AI Safety Summit, 1-2 November 2023

- [2] “PM should make ethics a priority at AI Safety Summit, say tech professionals” - BCS ethics survey August 2023

- [3] Championing Responsible Innovation: Reflections from the CDEI advisory board (September 2023)

- [4] Introducing the AI Safety Institute (November 2023)

- [5] “What is Responsible Computing?” – Harvard Business Review July 2023

- [6] EU ICT Ethics (2023)

This article was prepared by Professor Bernd Stahl FBCS; Adem Certel; Dr Neil Gordon and Gillian Arnold FBCS for BCS’ Ethics Specialist Group, and supported by BCS’ Fellows Technical Advisory Group (F-TAG).